Text-to-Speech Software

Speech synthesis is the artificial production of human speech. A computer system used for this purpose is called a speech computer or speech synthesizer, and can be implemented in software or hardware products. A text-to-speech (TTS) system converts normal language text into speech; other systems render symbolic linguistic representations like phonetic transcriptions into speech.[1]

Synthesized speech can be created by concatenating pieces of recorded speech that are stored in a database. Systems differ in the size of the stored speech units; a system that stores phones or diphones provides the largest output range, but may lack clarity. For specific usage domains, the storage of entire words or sentences allows for high-quality output. Alternatively, a synthesizer can incorporate a model of the vocal tract and other human voice characteristics to create a completely "synthetic" voice output.[2]
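The concatenation idea can be illustrated with a minimal Python sketch. Everything here is an assumption made for illustration: the `UNIT_DB` contents, the diphone-style unit names, and the linear crossfade used to smooth the joins are hypothetical and do not describe any particular product.

```python
from typing import Dict, List

# Hypothetical unit database: unit name -> recorded samples in [-1, 1].
# A real system would hold recordings of phones, diphones, words or sentences.
UNIT_DB: Dict[str, List[float]] = {
    "h-e": [0.0, 0.2, 0.4, 0.3],
    "e-l": [0.3, 0.1, -0.1, -0.2],
    "l-o": [-0.2, 0.0, 0.2, 0.1],
}


def crossfade_concat(a: List[float], b: List[float], overlap: int) -> List[float]:
    """Join two sample lists, linearly crossfading `overlap` samples at the seam."""
    overlap = min(overlap, len(a), len(b))
    if overlap == 0:
        return a + b
    head, tail = a[:-overlap], a[-overlap:]
    mixed = []
    for i in range(overlap):
        w = (i + 1) / (overlap + 1)  # weight ramps from the old unit to the new one
        mixed.append((1 - w) * tail[i] + w * b[i])
    return head + mixed + b[overlap:]


def synthesize(unit_names: List[str], overlap: int = 2) -> List[float]:
    """Look up each unit in the database and concatenate the recordings."""
    out: List[float] = []
    for name in unit_names:
        out = crossfade_concat(out, UNIT_DB[name], overlap)
    return out


samples = synthesize(["h-e", "e-l", "l-o"])
print(len(samples))
```

Smaller units such as diphones can be recombined into any utterance, which is why they give the widest output range; the price is more joins, and each join is a place where clarity can suffer.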

The quality of a speech synthesizer is judged by its similarity to the human voice and by its ability to be understood clearly. An intelligible text-to-speech program allows people with visual impairments or reading disabilities to listen to written words on a home computer. Many computer operating systems have included speech synthesizers since the early 1990s.

A text-to-speech system (or "engine") is composed of two parts:[3] a front-end and a back-end. The front-end has two major tasks. First, it converts raw text containing symbols like numbers and abbreviations into the equivalent of written-out words. This process is often called text normalization, pre-processing, or tokenization. The front-end then assigns phonetic transcriptions to each word, and divides and marks the text into prosodic units, like phrases, clauses, and sentences. The process of assigning phonetic transcriptions to words is called text-to-phoneme or grapheme-to-phoneme conversion. Phonetic transcriptions and prosody information together make up the symbolic linguistic representation that is output by the front-end. The back-end, often referred to as the synthesizer, then converts the symbolic linguistic representation into sound. In certain systems, this part includes the computation of the target prosody (pitch contour, phoneme durations),[4] which is then imposed on the output speech.
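As a rough illustration of the first front-end task, the following Python sketch expands a few abbreviations and small numbers into written-out words. The abbreviation table and the function names are hypothetical, and the sketch deliberately ignores context, which a real normalizer needs (for example, "St." may mean "Street" or "Saint").

```python
import re

# Hypothetical abbreviation table; real front-ends use far larger,
# context-sensitive lexicons.
ABBREVIATIONS = {"Dr.": "Doctor", "St.": "Street", "etc.": "et cetera"}

ONES = ["zero", "one", "two", "three", "four", "five", "six", "seven",
        "eight", "nine", "ten", "eleven", "twelve", "thirteen", "fourteen",
        "fifteen", "sixteen", "seventeen", "eighteen", "nineteen"]
TENS = ["", "", "twenty", "thirty", "forty", "fifty", "sixty", "seventy",
        "eighty", "ninety"]


def number_to_words(n: int) -> str:
    """Spell out an integer in the range 0..999."""
    if not 0 <= n < 1000:
        raise ValueError("this sketch only handles 0..999")
    if n < 20:
        return ONES[n]
    if n < 100:
        tens, ones = divmod(n, 10)
        return TENS[tens] + ("-" + ONES[ones] if ones else "")
    hundreds, rest = divmod(n, 100)
    words = ONES[hundreds] + " hundred"
    return words + (" " + number_to_words(rest) if rest else "")


def normalize(text: str) -> str:
    """Replace known abbreviations and 1-3 digit numbers with words."""
    out = []
    for token in text.split():
        if token in ABBREVIATIONS:
            out.append(ABBREVIATIONS[token])
        elif re.fullmatch(r"[0-9]{1,3}", token):
            out.append(number_to_words(int(token)))
        else:
            out.append(token)
    return " ".join(out)


print(normalize("Dr. Smith lives at 42 Elm St."))
```

The normalized string would then be passed on to the grapheme-to-phoneme step, which is not shown here.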

The first speech system integrated into an operating system that shipped in quantity was Apple Computer's MacInTalk. The software was licensed from third-party developers Joseph Katz and Mark Barton (later, SoftVoice, Inc.) and was featured during the 1984 introduction of the Macintosh computer. This January demo required 512 kilobytes of RAM. As a result, it could not run in the 128 kilobytes of RAM the first Mac actually shipped with.[55] So, the demo was accomplished with a prototype 512k Mac, although those in attendance were not told of this and the synthesis demo created considerable excitement for the Macintosh. In the early 1990s Apple expanded its capabilities, offering system-wide text-to-speech support. With the introduction of faster PowerPC-based computers they included higher quality voice sampling. Apple also introduced speech recognition into its systems, which provided a fluid command set. More recently, Apple has added sample-based voices. Starting as a curiosity, the speech system of Apple Macintosh has evolved into a fully supported program, PlainTalk, for people with vision problems.

VoiceOver was first featured in 2005 in Mac OS X Tiger (10.4). During 10.4 (Tiger) and the first releases of 10.5 (Leopard) there was only one standard voice shipping with Mac OS X. Starting with 10.6 (Snow Leopard), the user can choose from a wide range of voices. VoiceOver voices feature the taking of realistic-sounding breaths between sentences, as well as improved clarity at high read rates over PlainTalk. Mac OS X also includes say, a command-line based application that converts text to audible speech. The AppleScript Standard Additions include a say verb that allows a script to use any of the installed voices and to control the pitch, speaking rate and modulation of the spoken text.
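A minimal Python sketch of driving the command-line tool from a script follows. It assumes the macOS command `say` with its `-v` (voice) and `-r` (rate in words per minute) options; the helper names `build_say_command` and `speak` are hypothetical, and the voice name "Alex" is only an example that may not be installed on a given machine.

```python
import shutil
import subprocess
from typing import List, Optional


def build_say_command(text: str,
                      voice: Optional[str] = None,
                      rate_wpm: Optional[int] = None) -> List[str]:
    """Build the argument list for the macOS `say` command."""
    cmd = ["say"]
    if voice:
        cmd += ["-v", voice]          # choose one of the installed voices
    if rate_wpm is not None:
        cmd += ["-r", str(rate_wpm)]  # speaking rate in words per minute
    cmd.append(text)
    return cmd


def speak(text: str,
          voice: Optional[str] = None,
          rate_wpm: Optional[int] = None) -> bool:
    """Speak `text` if `say` is available; return False on other systems."""
    if shutil.which("say") is None:
        return False
    subprocess.run(build_say_command(text, voice, rate_wpm), check=True)
    return True


print(build_say_command("Hello from the command line", voice="Alex", rate_wpm=180))
```

Passing the arguments as a list rather than a single shell string avoids quoting problems when the text contains spaces or punctuation.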

The Apple iOS operating system used on the iPhone, iPad and iPod Touch uses VoiceOver speech synthesis for accessibility.[56] Some third-party applications also provide speech synthesis to facilitate navigating, reading web pages or translating text.

The second operating system to feature advanced speech synthesis capabilities was AmigaOS, introduced in 1985. The voice synthesis was licensed by Commodore International from SoftVoice, Inc., who also developed the original MacinTalk text-to-speech system. It featured a complete system of voice emulation for American English, with both male and female voices and "stress" indicator markers, made possible through the Amiga's audio chipset.[57] The synthesis system was divided into a translator library, which converted unrestricted English text into a standard set of phonetic codes, and a narrator device, which implemented a formant model of speech generation. AmigaOS also featured a high-level "Speak Handler", which allowed command-line users to redirect text output to speech. Speech synthesis was occasionally used in third-party programs, particularly word processors and educational software. The synthesis software remained largely unchanged from the first AmigaOS release, and Commodore eventually removed speech synthesis support from AmigaOS 2.1 onward.
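To give a feel for what a formant model of speech generation involves, here is a small Python sketch. It is not the Amiga narrator device's implementation: it assumes a generic textbook approach in which an impulse train at the pitch frequency is passed through a cascade of two-pole resonators, and the formant frequencies and bandwidths are rough illustrative values for an "ah"-like vowel.

```python
import math
from typing import List, Sequence, Tuple


def resonator(signal: Sequence[float], freq_hz: float,
              bandwidth_hz: float, sample_rate: int) -> List[float]:
    """Two-pole resonator: y[n] = x[n] + a1*y[n-1] + a2*y[n-2]."""
    r = math.exp(-math.pi * bandwidth_hz / sample_rate)  # pole radius (< 1, stable)
    theta = 2 * math.pi * freq_hz / sample_rate          # pole angle
    a1, a2 = 2 * r * math.cos(theta), -r * r
    y1 = y2 = 0.0
    out = []
    for x in signal:
        y = x + a1 * y1 + a2 * y2
        out.append(y)
        y2, y1 = y1, y
    return out


def synthesize_vowel(formants: Sequence[Tuple[float, float]],
                     pitch_hz: float = 110.0,
                     duration_s: float = 0.2,
                     sample_rate: int = 16000) -> List[float]:
    """Filter an impulse train through one resonator per formant."""
    n = round(duration_s * sample_rate)
    period = int(sample_rate / pitch_hz)
    # Glottal source approximated by one unit impulse per pitch period.
    signal: List[float] = [1.0 if i % period == 0 else 0.0 for i in range(n)]
    for freq_hz, bandwidth_hz in formants:
        signal = resonator(signal, freq_hz, bandwidth_hz, sample_rate)
    peak = max(abs(s) for s in signal)
    return [s / peak for s in signal]  # normalize to [-1, 1]


# Illustrative (frequency, bandwidth) pairs in Hz, not measured data.
AH_FORMANTS = [(700.0, 80.0), (1200.0, 90.0), (2600.0, 120.0)]
samples = synthesize_vowel(AH_FORMANTS)
print(len(samples))
```

Because the sound is computed from a handful of parameters rather than played back from recordings, a formant synthesizer needs very little memory, which suited the home computers of the period.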

Despite the American English phoneme limitation, an unofficial version with multilingual speech synthesis was developed. This made use of an enhanced version of the translator library which could translate a number of languages, given a set of rules for each language.[58]
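The idea of one translator driven by a separate rule set per language can be sketched in Python as below. The rule tables, the phonetic codes and the longest-match strategy are all hypothetical toy choices for illustration; they are not the Amiga translator library's actual rules or code set.

```python
from typing import Dict, List, Tuple

# Hypothetical letter-to-sound rules: (grapheme, phonetic code) per language.
RULES: Dict[str, List[Tuple[str, str]]] = {
    "en": [("sh", "SH"), ("ch", "CH"), ("i", "IH"), ("p", "P"),
           ("s", "S"), ("h", "HH"), ("c", "K")],
    "de": [("sch", "SH"), ("ch", "X"), ("i", "IH"), ("f", "F"),
           ("s", "S"), ("h", "H"), ("c", "K")],
}


def translate(text: str, language: str) -> List[str]:
    """Convert text to phonetic codes using the rule set for `language`."""
    # Try longer graphemes first so "sch" wins over "s" followed by "ch".
    rules = sorted(RULES[language], key=lambda rule: -len(rule[0]))
    word = text.lower()
    codes: List[str] = []
    i = 0
    while i < len(word):
        for grapheme, code in rules:
            if word.startswith(grapheme, i):
                codes.append(code)
                i += len(grapheme)
                break
        else:
            i += 1  # no rule for this character: skip it in this sketch
    return codes


print(translate("ship", "en"))
print(translate("schiff", "de"))
```

Keeping the rules as data means that supporting another language is a matter of adding a table rather than changing the translator code.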

Microsoft Windows

Modern Windows desktop systems can use SAPI 4 and SAPI 5 components to support speech synthesis and speech recognition. SAPI 4.0 was available as an optional add-on for Windows 95 and Windows 98. Windows 2000 added Narrator, a text-to-speech utility for people who have visual impairment. Third-party programs such as JAWS for Windows, Window-Eyes, Non-visual Desktop Access, Supernova and System Access can perform various text-to-speech tasks such as reading text aloud from a specified website, email account, text document, the Windows clipboard, the user's keyboard typing, etc. Not all programs can use speech synthesis directly.[59] Some programs can use plug-ins, extensions or add-ons to read text aloud. Third-party programs are available that can read text from the system clipboard.

Microsoft Speech Server is a server-based package for voice synthesis and recognition. It is designed for network use with web applications and call centers.

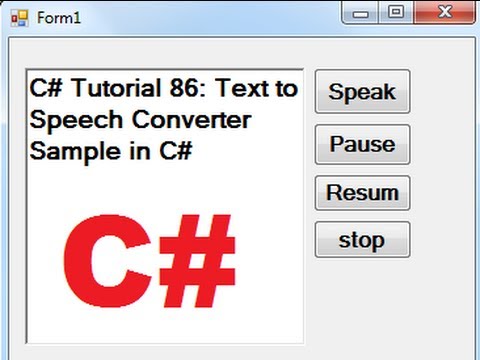

Text-to-Speech (TTS) refers to the ability of computers to read text aloud. A TTS Engine converts written text to a phonemic representation, then converts the phonemic representation to waveforms that can be output as sound. TTS engines with different languages, dialects and specialized vocabularies are available through third-party publishers.[60]

Currently, there are a number of applications, plugins and gadgets that can read messages directly from an e-mail client and web pages from a web browser or Google Toolbar. Some specialized software can narrate RSS feeds. On one hand, online RSS narrators simplify information delivery by allowing users to listen to their favourite news sources and to convert them to podcasts. On the other hand, online RSS readers are available on almost any PC connected to the Internet. Users can download generated audio files to portable devices, e.g. with the help of a podcast receiver, and listen to them while walking, jogging or commuting to work.

A growing field in Internet-based TTS is web-based assistive technology, e.g. 'Browsealoud' from a UK company and Readspeaker. It can deliver TTS functionality to anyone (for reasons of accessibility, convenience, entertainment or information) with access to a web browser. The non-profit project Pediaphon was created in 2006 to provide a similar web-based TTS interface to the Wikipedia.[62]

Other work is being done in the context of the W3C through the W3C Audio Incubator Group with the involvement of The BBC and Google Inc.

Some open-source software systems are available, such as:

Festival Speech Synthesis System which uses diphone-based synthesis, as well as more modern and better-sounding techniques.

eSpeak which supports a broad range of languages.

gnuspeech which uses articulatory synthesis[63] from the Free Software Foundation.

Others

Following the commercial failure of the hardware-based Intellivoice, gaming developers sparingly used software synthesis in later games. A famous example is the introductory narration of Nintendo's Super Metroid game for the Super Nintendo Entertainment System. Earlier systems from Atari, such as the Atari 5200 (Baseball) and the Atari 2600 (Quadrun and Open Sesame), also had games utilizing software synthesis.

Some e-book readers, such as the Amazon Kindle, Samsung E6, PocketBook eReader Pro, enTourage eDGe, and the Bebook Neo.

The BBC Micro incorporated the Texas Instruments TMS5220 speech synthesis chip.

Some models of Texas Instruments home computers produced in 1979 and 1981 (Texas Instruments TI-99/4 and TI-99/4A) were capable of text-to-phoneme synthesis or reciting complete words and phrases (text-to-dictionary), using a very popular Speech Synthesizer peripheral. TI used a proprietary codec to embed complete spoken phrases into applications, primarily video games.[64]

IBM's OS/2 Warp 4 included VoiceType, a precursor to IBM ViaVoice.

GPS Navigation units produced by Garmin, Magellan, TomTom and others use speech synthesis for automobile navigation.

Yamaha produced a music synthesizer in 1999, the Yamaha FS1R, which included a formant synthesis capability. Sequences of up to 512 individual vowel and consonant formants could be stored and replayed, allowing short vocal ph
